Accelerating route discovery and process optimization

Process development sits at the intersection of molecular discovery and scalable engineering. It is the critical bridge where a synthetic route is transformed into a robust, reproducible process. Every decision, from catalyst loading to temperature, impacts yield, impurity profiles, safety, and cost. However, as process complexity increases, traditional trial-and-error experimentation cannot keep up with tighter specifications and multi-variable interactions.

Machine Learning (ML) and predictive modeling introduce a structured way to explore chemical space. By leveraging Bayesian Optimization (BO), teams can navigate complex reaction spaces with far fewer experiments, turning each run into actionable insight while keeping the chemist’s expertise in the loop.

Key takeaways of this blog

● Closed-loop, adaptive workflows outperform static DoE in complex process development by learning from each experiment and prioritizing high-value runs.

● Probabilistic ML models predict both the outcome and its uncertainty, helping teams reach robust operating regions with fewer experiments.

● Step economy improves early route selection by favoring shorter, more scalable syntheses that minimize cost and waste at the development stage.

● Hybrid physics + ML models anticipate scale-up risks, allowing teams to validate critical parameters before pilot trials.

● Successful adoption depends on strong initialization, model monitoring, operational guardrails, and expert oversight.

Challenges in Process Development: A Multi-Objective Balancing Act

Process development is inherently multi-objective. Chemists and process engineers must manage common variables like temperature and stoichiometry while balancing trade-offs between yield, cost, and sustainability.

| Common variables | Typical trade-offs | Scalability & Transfer Risks |
|---|---|---|
| Temperature, pressure, catalyst loading, solvent choice, residence time, mixing intensity, pH, stoichiometry, crystallization conditions. | Yield vs. selectivity/impurities; throughput (production rate) vs. thermal control; cost vs. sustainability (E‑factor, kg waste per kg product). | Sensitivity to mass-transfer and heat-removal limits; new impurity pathways. |

Having a structured view of these factors helps prioritize which decisions matter most under real constraints.

Why trial-and-error slows progress

Traditional approaches such as manual tinkering reach their limits when many variables interact. Here are the main reasons.

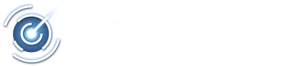

Image by Atinary via AI image tools.

Local optima trap

Making small tweaks improves the process until it seems good enough, but this only climbs the nearest performance peak; the global optimum remains hidden. With BO, experiment planning is data-driven and guided by AI/ML, allowing researchers to search the complex, often constrained, space of possible experiments more efficiently and to approach the global optimum in far fewer experiments than traditional approaches require.

Invisible high-dimensional surfaces

Variables like temperature, solvent, catalyst, time, and mixing interact nonlinearly. The resulting response surface can have folds and multiple peaks in high dimensions, which humans cannot easily visualize.

Poor exploration vs. excessive exploitation

Trial-and-error tends to favor safe, incremental changes (exploitation) while neglecting uncertain regions of the space where larger gains might lie. BO acquisition functions explicitly balance exploration and exploitation, whereas unguided search often misses those unexplored regions.
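To make the exploration/exploitation balance concrete, here is a minimal sketch of one widely used acquisition function, Expected Improvement (EI), for a maximization problem. It is an illustration only, not a description of how any particular platform is implemented: the candidate names, the predicted means and standard deviations, and the `xi` margin are all hypothetical values chosen for the example.

```python
import math


def expected_improvement(mu, sigma, best_so_far, xi=0.01):
    """Expected Improvement for maximization.

    mu, sigma   : surrogate model's predicted mean and standard deviation
    best_so_far : best outcome observed in experiments so far
    xi          : small margin that nudges the search toward exploration
    """
    improvement = mu - best_so_far - xi
    if sigma <= 0.0:
        # No uncertainty: the improvement is either certain or impossible.
        return max(improvement, 0.0)
    z = improvement / sigma
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return improvement * cdf + sigma * pdf


# Hypothetical surrogate predictions: (mean yield in %, standard deviation)
candidates = {
    "near current best": (81.0, 0.5),   # safe, incremental tweak
    "unexplored region": (78.0, 6.0),   # lower mean, high uncertainty
}
best_yield = 80.0

scores = {
    name: expected_improvement(mu, sigma, best_yield)
    for name, (mu, sigma) in candidates.items()
}
next_experiment = max(scores, key=scores.get)
```

With these illustrative numbers, the uncertain candidate scores higher than the incremental one even though its predicted mean is lower, which is exactly the kind of run that habit-driven trial-and-error tends to skip.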

Scaling costs and reproducibility risk

Chasing small improvements by habit wastes time, reagents, and labor. Hidden constraints or noise (operator variability, measurement error) further degrade results. The outcome is slower progress, more repeated pilot runs, and missed opportunities to increase yield or sustainability.

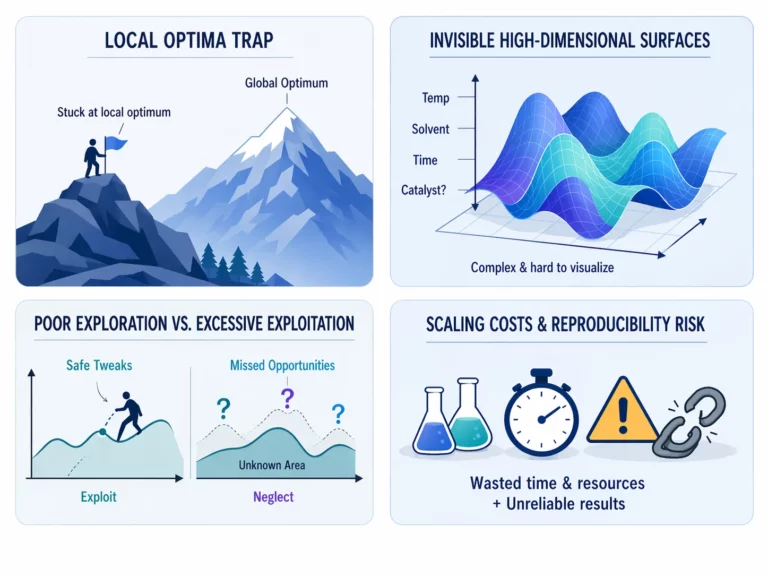

Why traditional optimization struggles at scale

Standard strategies like one-factor-at-a-time (OFAT) or static Design of Experiments (DoE) are effective for low-dimensional problems. However, they struggle to adapt when new information emerges.

Closed-loop (adaptive) workflows, like those powered by SDLabs, continuously update decisions based on incoming results. Each experiment informs the next, which maximizes learning from every run and enables faster convergence and more efficient exploration of large, complex spaces. This is a critical advantage when materials are costly or time is limited.

Image by Atinary via AI image tools.

ML for route selection and the step economy

Early-stage route selection establishes the foundation for scale-up. Teams generate several candidate routes and must quickly identify the most practical options. ML helps teams rank candidate routes by balancing technical viability with cost.

Step economy as a decision framework for route selection

Step economy is a core principle here. It is also a critical component of green chemistry. By minimizing the total number of steps required to create a target molecule, labs reduce the waste, labor, time, and costs associated with extensive purification steps, reagents, and solvents. ML models help prioritize chemically robust and scalable syntheses, which are the essential stepping stones for successful technology transfer. They achieve this by analyzing:

● Route Metrics: Step count, overall yield, and E-factor.

● Resource Optimization: Reagent cost and market availability.

● Safety & Regulatory: Identifying hazardous steps and unit operation complexity.
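The route metrics above follow from simple arithmetic, which a short sketch can illustrate. Overall yield is the product of the individual step yields, and the E-factor is kilograms of waste per kilogram of product. The two routes and their 90% step yields are hypothetical numbers chosen only to show why step count matters.

```python
from math import prod


def overall_yield(step_yields):
    """Overall yield of a linear route: the product of its step yields."""
    return prod(step_yields)


def e_factor(waste_kg, product_kg):
    """E-factor: kg of waste generated per kg of product."""
    return waste_kg / product_kg


# Hypothetical routes where every step runs at 90% yield
routes = {
    "route A (5 steps)": [0.90] * 5,
    "route B (10 steps)": [0.90] * 10,
}

summary = {name: overall_yield(yields) for name, yields in routes.items()}

# Rank routes from highest to lowest overall yield
ranking = sorted(summary, key=summary.get, reverse=True)
```

Even with identical per-step performance, the five-step route retains roughly 59% of the material while the ten-step route retains roughly 35%. A real ranking would weigh this against cost, availability, and safety rather than yield alone.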

Predictive process design and early risk detection

Before building a pilot plant, predictive surrogate models can identify Critical Process Parameters (CPPs) and flag risks like thermal runaway or impurity formation.

Failure modes

Models alert when a parameter change might push impurity levels or heat removal beyond acceptable limits.

Sensitivity analysis

Many ML models decompose predictions into contributions from each factor, highlighting which variables most influence yield.
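As a minimal sketch of what such a decomposition looks like, consider the simplest case of a linear surrogate, where each factor's contribution is its weight multiplied by its deviation from a baseline condition. The weights, baseline, and operating point below are hypothetical; nonlinear models need dedicated attribution methods, which this example does not attempt to reproduce.

```python
def linear_contributions(weights, x, baseline):
    """Per-factor contribution to the predicted change relative to a baseline.

    Assumes a linear surrogate: prediction = intercept + sum(w_i * x_i).
    """
    return {name: weights[name] * (x[name] - baseline[name]) for name in weights}


# Hypothetical fitted weights (yield % per unit change of each factor)
weights = {"temperature_C": 0.30, "catalyst_mol_pct": 4.0, "residence_time_min": -0.10}
baseline = {"temperature_C": 60.0, "catalyst_mol_pct": 1.0, "residence_time_min": 30.0}
x = {"temperature_C": 70.0, "catalyst_mol_pct": 1.5, "residence_time_min": 40.0}

contrib = linear_contributions(weights, x, baseline)

# Factors ordered by the magnitude of their influence on predicted yield
ranked = sorted(contrib, key=lambda name: abs(contrib[name]), reverse=True)
predicted_change = sum(contrib.values())
```

In this illustrative case temperature dominates, catalyst loading comes second, and the longer residence time slightly reduces the predicted yield.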

Digital twins

Hybrid models combine first-principles engineering with ML corrections to run virtual experiments in silico.

Using these tools, resources shift from broad troubleshooting to validating specific risks, as the use cases below illustrate.

Atinary Use Case: Accelerating Hydroformylation R&D

In collaboration with a global leader in the fragrance and flavor industry, Atinary’s SDLabs platform was utilized to optimize a complex hydroformylation process. This case study serves as a blueprint for AI-driven process optimization in industrial settings:

● Complex Space Navigation: The platform explored a 7-dimensional search space containing approximately 2.9 billion possible parameter combinations.

● Performance and Precision: Optimal conditions were identified in just 88 experiments, achieving up to 30x improved performance while sustaining high conversion and selectivity.

● Sustainability and Innovation: The campaign resulted in a 10x to 30x reduction in Rhodium consumption and a 50% faster reaction time, delivering a significantly greener chemical process.

● Strategic Impact: By reducing catalyst cost contributions by over 95%, this project demonstrated the SDLabs platform as an effective tool for accelerating R&D and enabling greener industrial chemistry.

Use Case with ETH Zurich’s SwissCat+: 100 Years of Catalyst Research in 6 Weeks

Our collaboration with ETH Zurich’s SwissCat+ hub illustrates the transformative potential of Self-Driving Labs® in catalyst discovery. The team sought an optimal catalyst for converting carbon dioxide (CO2) into renewable methanol.

● Accelerated Discovery: Using Bayesian Optimization (BO) on the SDLabs platform, the team successfully navigated a parameter space of 20 million combinations.

● Unprecedented Speed: Starting from scratch with no prior data, researchers reproduced 100 years of traditional research results in just 6 weeks.

● Efficiency Gains: This approach was more than 1,000 times faster than conventional manual methods.

● Optimized Outcomes: The final iteration achieved maximum performance while reducing unwanted by-products and metal costs.

The figure below gives an overview of this achievement.

Adapted from a figure in Ramirez et al., Chem Catalysis, 2024. Results of each optimization iteration conducted by SwissCat+ in just 6 weeks, in contrast to the decades of catalyst R&D in CO2-to-methanol conversion.

AI-Driven Process Robustness and Scalability

Scaling from bench to plant introduces new variables like different mixing, heat removal, and time scales. AI can help translate lab results into robust development plans by:

Parameter mapping

Understanding how reaction kinetics respond to changing physical environments.

Equipment constraints

Constrained optimization algorithms incorporate physical limits like max flow rates or heat exchange capacity to ensure recommended conditions are actually feasible in the pilot/plant context.
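The idea can be sketched in its simplest form as a feasibility filter applied before choosing the next condition. This is a deliberately reduced illustration, not a full constrained optimizer: the equipment limits, the `Candidate` fields, and the predicted yields are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    flow_rate_l_min: float   # proposed flow rate
    heat_duty_kw: float      # heat the reactor must remove at these conditions
    predicted_yield: float   # surrogate model's predicted yield (%)


# Hypothetical pilot-plant equipment limits
MAX_FLOW_RATE_L_MIN = 2.0
MAX_HEAT_REMOVAL_KW = 15.0


def is_feasible(c):
    """True if the candidate respects every equipment limit."""
    return (c.flow_rate_l_min <= MAX_FLOW_RATE_L_MIN
            and c.heat_duty_kw <= MAX_HEAT_REMOVAL_KW)


def best_feasible(candidates):
    """Highest predicted yield among feasible candidates, or None if there are none."""
    feasible = [c for c in candidates if is_feasible(c)]
    return max(feasible, key=lambda c: c.predicted_yield) if feasible else None


candidates = [
    Candidate(1.5, 12.0, 84.0),
    Candidate(2.5, 10.0, 91.0),   # exceeds the flow-rate limit
    Candidate(1.8, 18.0, 89.0),   # exceeds the heat-removal limit
    Candidate(1.9, 14.5, 86.0),
]

choice = best_feasible(candidates)
```

The two highest-yielding candidates are rejected because the equipment could not run them, so the recommendation falls to the best condition that is actually achievable at scale.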

Continuous flow chemistry

Suggesting continuous flow configurations to improve thermal control and reduce hotspots during translation to larger scales.

Smart experiment planning

Instead of brute-force pilot DoE, ML models prioritize experiments that close the largest uncertainty gaps in scale-up, ensuring development campaigns focus only on the riskiest conditions.

Towards a New Standard in R&D: Exponential Science

Atinary’s Co-Founders, Dr. Hermann Tribukait and Dr. Loïc Roch, introduced the term Self-Driving Labs® in 2017 to describe this shift toward vertically integrated, autonomous discovery. Adaptive learning turns each experiment into actionable knowledge, guiding teams toward better routes and conditions faster.

When processes are complex and sustainability is critical, these tools provide clarity: they reveal hidden trade-offs, quantify confidence, and enable smarter decision-making. The result is a development cycle that is faster, safer, and more data-driven – leveraging AI to accelerate progress while keeping scientists in control. By treating every experiment as an input to a compounding data flywheel, we enable laboratories to move beyond isolated wins and toward a constant stream of Exponential Science.

Atinary applies adaptive experimentation, uncertainty-aware optimization, and human-in-the-loop workflows aligned with the practices outlined here.

By focusing on high-fidelity data at the R&D scale, we ensure that the transition from benchtop to production is a calculated step rather than a leap of faith.

If you are curious whether this approach fits your process development challenges, you can share a few details about your project and explore a potential collaboration with our scientific team.

This post was developed with contributions from Dr. Mohammed Azzouzi (Applications Engineer) and Lucien Brey (ML Scientist) at Atinary.